Lately I’ve been looking at some tools to make it easier to package containerized applications as lightweight virtual machines, to get the best of both worlds: the developer experience of a Dockerfile with the added protection of a hypervisor.

Using LinuxKit Generated ISO in Docker for Mac

LinuxKit is a new project presented by Docker at DockerCon 2017. The project describes itself as “a secure, portable and lean operating system built for containers”, and I’m already feeling excited. Once you have built the tool, use linuxkit build linuxkit.yml to build the example configuration. You can also specify different output formats, e.g. linuxkit build -format raw-bios linuxkit.yml to output a raw BIOS bootable disk image, or linuxkit build -format iso-efi linuxkit.yml to output an EFI bootable ISO image. See linuxkit build -help for more information.

As part of this process, I’ve been digging into Juniper’s containerized routing stack called cRPD, and trying to get that into a virtual form factor, so that I can effectively have a Linux router that happens to use the Junos routing stack. I’ve worked out an approach for doing this that could, in theory, extend to all kinds of other software, including other disaggregated routing stacks, such as Free-Range Routing.

NOTE - you need access to download the cRPD software from Juniper if you wish to follow the instructions in this post, and at the time of this writing, there is no free trial for cRPD. However, a lot of us at Juniper are actively working on getting cRPD into your hands more easily, so stay tuned!

This post is written for folks like me who are looking to define their own modular, automated OS build. As a result, it assumes some prior knowledge of Linux and Docker concepts. You’ll need a few things set up in advance:

- Docker

- A Linux-based host OS (I’m using Ubuntu)

- A hypervisor (these instructions are for QEMU)

- Git

What is cRPD? What is LinuxKit?

cRPD takes some of the best parts of Junos (RPD, MGD), disaggregates and packages them in a lightweight container image.

Because it’s so stripped down, it is neither designed nor able to function as a full-blown operating system, or to manage network interfaces the way we’re accustomed to on a typical Junos device - it’s just a routing stack with a management process. This means that in order to turn it into a real routing element, we need to run it on an existing OS, like Linux.

LinuxKit is “a toolkit for building custom minimal, immutable Linux distributions”. It allows you to build your own lightweight Linux distribution using containers. It uses a YAML-based specification where you can specify the components that go into your distribution, like the kernel, init systems, and the userspace processes you wish to deploy inside - all running as containers, but with the added layer of true virtual isolation.

For our purposes, this is a match made in heaven: by pairing the powerful, mature routing stack and management daemon/CLI of cRPD with the automated, modular, and lightweight Linux distribution built with LinuxKit, we get the best of both worlds.

Building Our Custom cRPD Image

Before we get to building our own Linux distribution, we need to make some modifications to the Docker image we get with cRPD. Out of the box, cRPD is designed to be minimal, meaning there are a number of options that are not enabled or configured by default. To get things like SSH and NETCONF working, we’ll actually build our own custom Docker image that takes the base image we’ll download from the Juniper website, and adds the relevant configurations to make it useful for our purposes.

I created the crpd-linuxkit repo with all of the files we’ll be using for this build, so start off by cloning that repository:

Next, follow the cRPD installation instructions (specifically the section titled Download the cRPD Software) to download a .tgz file containing the cRPD image. This is a tarball that Docker recognizes and can import into your local Docker image cache. Continue only once you see your image in the output of docker image ls | grep crpd.

We’re going to build a custom Docker image that uses this cRPD image as its base, so we can add things like a proper SSH configuration. This Dockerfile uses crpd:latest as its base image reference, which doesn’t yet exist. The following command will look up the ID of the image we just imported, and re-tag it as crpd:latest so we can use it in our custom build:
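You may prefer to script the re-tagging step. Here is a minimal Python sketch of one way to do it. It assumes the image you imported has "crpd" somewhere in its repository name. It also relies on docker image ls --format to print one "repository tag ID" line per image. This is an illustration, not the command from the original walkthrough.

```python
"""Sketch: find the imported cRPD image ID and re-tag it as crpd:latest."""
import subprocess

# Ask docker to print one "repository tag id" line per local image.
LS_FORMAT = "{{.Repository}} {{.Tag}} {{.ID}}"


def find_image_id(ls_output: str, repo_substring: str = "crpd") -> str:
    """Return the ID of the first image whose repository contains repo_substring."""
    for line in ls_output.splitlines():
        parts = line.split()
        if len(parts) != 3:
            continue  # skip blank or unexpected lines
        repository, _tag, image_id = parts
        if repo_substring in repository:
            return image_id
    raise LookupError(f"no image matching {repo_substring!r} found")


def retag_command(image_id: str, target: str = "crpd:latest") -> list[str]:
    """Build the argv for `docker tag <id> <target>`."""
    return ["docker", "tag", image_id, target]


if __name__ == "__main__":
    listing = subprocess.run(
        ["docker", "image", "ls", "--format", LS_FORMAT],
        check=True, capture_output=True, text=True,
    ).stdout
    subprocess.run(retag_command(find_image_id(listing)), check=True)
```

Keeping the parsing separate from the subprocess calls makes the lookup easy to check against a captured listing before you let it touch your image cache.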

If you take a peek at the files in the repository, you’ll notice we have a few things that will go into our custom Docker image:

- Dockerfile - copies our modified configuration files into the container image, and also sets up authentication options.

- launch.sh - a launch script which is executed when the container starts, to copy our configuration files into the correct location, and also start relevant services like the ssh service.

- sshd_config - this is our modified SSH configuration, which includes a very important step of pointing the NETCONF subsystem to the appropriate path. This way, NETCONF requests will go straight to cRPD.

This walkthrough won’t go into much more detail on these files or the others in the repository, so if you see something you don’t understand, cat its contents and read it for yourself! I added comments where I could.

These are all tied together with the Dockerfile, so you should be able to run the below to build everything:

To be clear, our ultimate goal is to run cRPD inside a virtual machine that we create with LinuxKit, but why don’t we take a second to marvel at the fact that we now have a container image that runs the Junos routing stack! Let’s start an instance of it to play around with real quick for funsies:

We can interactively enter the Junos CLI with:
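For reference, the build, start, CLI and cleanup steps can be sketched as Python argv lists. The image tag crpd-custom, the container name crpd-test, and the --privileged flag are my assumptions, not values taken from the repository. cli is the command that opens the Junos CLI inside a cRPD container.

```python
"""Sketch of the build, run, CLI, and cleanup steps as docker argv lists."""
import subprocess

IMAGE_TAG = "crpd-custom"   # hypothetical tag for the custom image
CONTAINER = "crpd-test"     # hypothetical container name


def build_cmd(context_dir: str = ".") -> list[str]:
    # Build the Dockerfile at the root of the cloned crpd-linuxkit repository.
    return ["docker", "build", "-t", IMAGE_TAG, context_dir]


def run_cmd() -> list[str]:
    # Start detached; --privileged is an assumption so rpd can manage routes.
    return ["docker", "run", "-d", "--privileged", "--name", CONTAINER, IMAGE_TAG]


def cli_cmd() -> list[str]:
    # Open the Junos CLI inside the running container (interactive).
    return ["docker", "exec", "-it", CONTAINER, "cli"]


def cleanup_cmd() -> list[str]:
    # Stop and remove the test container once you're done exploring.
    return ["docker", "rm", "-f", CONTAINER]


if __name__ == "__main__":
    for cmd in (build_cmd(), run_cmd(), cli_cmd()):
        subprocess.run(cmd, check=True)
```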

This should start looking a lot more familiar:

However, it’s not without its oddities - for instance, there is no show interfaces terse command!

Keep in mind, this is just the Junos routing stack and management daemon. It has no control over the network interfaces themselves; those are still firmly under the control of the underlying operating system, which in this case is my laptop, since I’m running this natively in Docker.

We can view things like the routing status for network interfaces, as that’s relevant to what cRPD is designed to do:

This is just the beginning. If you’re new to cRPD and just want to play with it without setting it up yourself, stay tuned: one of the reasons I’ve been working on this is to build a reproducible image for the NRE Labs curriculum. Follow along there or on Twitter, as I’m hoping to publish something like this in the next few months.

Let’s exit and delete our cRPD container. From now on, we’ll be running cRPD inside a LinuxKit virtual machine.

Building the Router VM Image with LinuxKit

Okay, so we have our cRPD container image, but again, it’s not designed to function as a full-blown operating system. To actually pass traffic, we will use this container image as an “app” to be deployed in a brand-new Linux distribution that LinuxKit will create for us. The end result is a Linux-based VM that runs Junos software.

At the time of this writing, LinuxKit’s latest release is v0.7. Since I’m running on Linux, I’ll grab the precompiled binary for my platform, and pop it into /usr/local/bin:
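Here is a minimal Python sketch of the download step. It uses GitHub’s standard release-download URL pattern and assumes the v0.7 asset for 64-bit Linux is named linuxkit-linux-amd64. Check the release page before relying on that name. Run it with enough privileges to write to /usr/local/bin.

```python
"""Sketch: fetch a LinuxKit release binary into /usr/local/bin."""
import os
import stat
import urllib.request

# Assumption: assets are named linuxkit-<os>-<arch> on the release page.
RELEASE_URL = (
    "https://github.com/linuxkit/linuxkit/releases/download/"
    "{version}/linuxkit-{os}-{arch}"
)


def release_url(version: str = "v0.7", os_name: str = "linux", arch: str = "amd64") -> str:
    """Build the download URL for one release asset."""
    return RELEASE_URL.format(version=version, os=os_name, arch=arch)


def install(dest: str = "/usr/local/bin/linuxkit") -> None:
    # Download the binary, then add execute permission for everyone.
    urllib.request.urlretrieve(release_url(), dest)
    mode = os.stat(dest).st_mode
    os.chmod(dest, mode | stat.S_IXUSR | stat.S_IXGRP | stat.S_IXOTH)


if __name__ == "__main__":
    install()
```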

LinuxKit comes with a linuxkit run command, but I already have existing scripts for running VMs the way I want, so I just want LinuxKit to generate a virtual hard drive image that I can run myself.

Take a look at the contents of crpd.yml: the entire manifest for this virtual machine is defined there, from the Linux kernel version, to the init system, to the service that finally runs our cRPD image. We can feed this filename into the linuxkit build command to build our VM.
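To give a feel for what such a manifest contains, here is a rough Python sketch of its top-level sections: kernel, init, onboot and services. Every image reference is a placeholder rather than what crpd.yml actually pins, and the crpd-custom image name is hypothetical. The script prints the structure as JSON, which YAML parsers generally accept as-is.

```python
"""Rough shape of a LinuxKit manifest like crpd.yml (placeholders only)."""
import json

manifest = {
    # Kernel image plus boot command line; ttyS0 sends the console to the serial port.
    "kernel": {"image": "linuxkit/kernel:<version>", "cmdline": "console=ttyS0"},
    # Images unpacked into the root filesystem: init, runc, and containerd.
    "init": [
        "linuxkit/init:<hash>",
        "linuxkit/runc:<hash>",
        "linuxkit/containerd:<hash>",
    ],
    # Short-lived containers run in order at boot, before services start.
    "onboot": [{"name": "sysctl", "image": "linuxkit/sysctl:<hash>"}],
    # Long-running containers supervised by containerd; cRPD runs here.
    "services": [
        {
            "name": "crpd",
            "image": "crpd-custom",   # hypothetical image name
            "capabilities": ["all"],
            "net": "host",            # share the VM's network namespace
        }
    ],
}

if __name__ == "__main__":
    # JSON is, for practical purposes, valid YAML, so this prints a usable skeleton.
    print(json.dumps(manifest, indent=2))
```

The key design point is that everything, including the routing stack, is just another container image listed in the manifest.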

LinuxKit can package its output in a variety of formats. I’ll be running my VMs with QEMU, so qcow2-bios is the most appropriate. The format is selected with the -format flag:

Note that the above requires KVM extensions to be available and enabled, and your user to be added to the kvm group. If you run into problems here, check that KVM virtualization support is configured properly.
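A quick pre-flight check before building can save some head-scratching. This Python sketch checks that /dev/kvm exists and is readable and writable, and that the current process belongs to the kvm group. It then runs the linuxkit build -format qcow2-bios crpd.yml invocation described above. The group check only sees the groups of your current login session, so log out and back in after adding yourself to kvm.

```python
"""Pre-flight KVM check, then build the qcow2-bios image (sketch)."""
import grp
import os
import subprocess


def kvm_ready(device: str = "/dev/kvm", group: str = "kvm") -> bool:
    """True if the KVM device exists and is accessible, and we're in the kvm group."""
    if not os.path.exists(device):
        return False  # KVM module not loaded, or virtualization disabled in firmware
    try:
        kvm_gid = grp.getgrnam(group).gr_gid
    except KeyError:
        return False  # this host has no such group
    # getgroups() reflects the current login session, not later /etc/group edits.
    in_group = kvm_gid == os.getgid() or kvm_gid in os.getgroups()
    return in_group and os.access(device, os.R_OK | os.W_OK)


def build_cmd(manifest: str = "crpd.yml", fmt: str = "qcow2-bios") -> list[str]:
    """Build the argv for `linuxkit build -format <fmt> <manifest>`."""
    return ["linuxkit", "build", "-format", fmt, manifest]


if __name__ == "__main__":
    if not kvm_ready():
        raise SystemExit("KVM not usable: check /dev/kvm and your kvm group membership")
    subprocess.run(build_cmd(), check=True)
```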

This may take a little time, as the linuxkit tool needs to download relevant images referenced in the build manifest, and then assemble them into a working distribution. At the end, you should end up with a file called crpd.qcow2 in your current directory.

Running a Routed Topology

We now have a virtual disk image we can use to boot instances of our cRPD virtual machine. We’ll look to start two instances, crpd1 and crpd2, and connect them via their eth1 interfaces, both connected to a bridge on the host. We’ll consider success to be that we’ve formed an OSPF adjacency, and have learned the route to crpd1’s loopback interface, and can ping it from crpd2.

So, first we’ll need to prep our host network configuration by creating a bridge and adding tap interfaces. This will allow our VMs to communicate with each other. Run the following as root:
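The bridge and tap setup could look like the following Python sketch, which only wraps standard iproute2 commands. The names br-crpd, tap-crpd1 and tap-crpd2 are hypothetical; the repository’s own setup may use different ones. When the script runs under sudo, the taps are handed to the invoking user so that QEMU can open them without root.

```python
"""Sketch: create a host bridge and one tap interface per VM (run as root)."""
import os
import subprocess
from typing import Optional

BRIDGE = "br-crpd"                  # hypothetical bridge name
TAPS = ("tap-crpd1", "tap-crpd2")   # hypothetical tap names, one per VM


def host_network_cmds(bridge: str = BRIDGE, taps: tuple[str, ...] = TAPS,
                      owner: Optional[str] = None) -> list[list[str]]:
    """Return the iproute2 commands that build the bridge and attach the taps."""
    cmds = [
        ["ip", "link", "add", "name", bridge, "type", "bridge"],
        ["ip", "link", "set", bridge, "up"],
    ]
    for tap in taps:
        tuntap = ["ip", "tuntap", "add", "dev", tap, "mode", "tap"]
        if owner:
            tuntap += ["user", owner]  # lets a non-root QEMU process open the tap
        cmds += [
            tuntap,
            ["ip", "link", "set", tap, "master", bridge],
            ["ip", "link", "set", tap, "up"],
        ]
    return cmds


if __name__ == "__main__":
    if os.geteuid() != 0:
        raise SystemExit("run this as root")
    for cmd in host_network_cmds(owner=os.environ.get("SUDO_USER")):
        subprocess.run(cmd, check=True)
```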

Next, we need to start the virtual machines. I provided a helper script you can run to copy our disk image once for each VM we want to start, as well as running the VMs with QEMU:

Note that this runs the VMs as detached screen sessions, so either make sure screen is installed, or you can run the commands in that script in separate terminal sessions/tabs.
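Here is a rough Python sketch of what such a helper does: it copies the disk image once per VM and starts QEMU in the background. The serial ports (5000/5001) and SSH ports (2022/2023) match this walkthrough. The memory size, tap names and MAC addresses are assumptions. Each VM gets its own MAC on the bridged NIC because QEMU otherwise gives both VMs the same default MAC. The user-mode NIC is listed first, so the bridged NIC should come up as eth1. Newer QEMU releases may warn that they prefer server=on,wait=off over server,nowait.

```python
"""Sketch: copy the base disk per VM and launch each VM with QEMU in the background."""
import shutil
import subprocess

BASE_IMAGE = "crpd.qcow2"
MEMORY_MB = 2048  # assumption; size to taste

# (name, tap interface, serial telnet port, host port forwarded to guest SSH)
VMS = (
    ("crpd1", "tap-crpd1", 5000, 2022),
    ("crpd2", "tap-crpd2", 5001, 2023),
)


def qemu_cmd(name: str, tap: str, serial_port: int, ssh_port: int, index: int) -> list[str]:
    """Build the QEMU argv for one VM; index makes the bridged MAC unique."""
    mac = f"52:54:00:00:01:{index:02x}"
    return [
        "qemu-system-x86_64",
        "-enable-kvm",
        "-m", str(MEMORY_MB),
        "-drive", f"file={name}.qcow2,format=qcow2,if=virtio",
        # First NIC (eth0): user-mode networking with SSH forwarded from localhost.
        "-netdev", f"user,id=mgmt,hostfwd=tcp:127.0.0.1:{ssh_port}-:22",
        "-device", "virtio-net-pci,netdev=mgmt",
        # Second NIC (eth1): attached to the host tap, and therefore the bridge.
        "-netdev", f"tap,id=data,ifname={tap},script=no,downscript=no",
        "-device", f"virtio-net-pci,netdev=data,mac={mac}",
        # Serial console reachable with: telnet 127.0.0.1 <serial_port>
        "-serial", f"telnet:127.0.0.1:{serial_port},server,nowait",
        "-display", "none",
        "-monitor", "none",
    ]


if __name__ == "__main__":
    for i, (name, tap, serial_port, ssh_port) in enumerate(VMS, start=1):
        shutil.copyfile(BASE_IMAGE, f"{name}.qcow2")  # one writable disk per VM
        subprocess.Popen(qemu_cmd(name, tap, serial_port, ssh_port, i))
```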

Don’t forget to stop these VMs with ./stop-vms.sh when you’re done with this walkthrough!

Once the script returns, you can use telnet 127.0.0.1 5000 and telnet 127.0.0.1 5001 to connect to the serial ports for crpd1 and crpd2, respectively. Once you see the prompt below (if you don’t see it after a while, try hitting Enter), the VM is booted, and cRPD should be running:

If you are still connected to the serial port, hit Ctrl+] and type quit to disconnect and return to the host shell.

At this point, we have two VMs that we’ll call crpd1 and crpd2 that are connected via their eth1 interfaces using a host bridge. So, the name of the game is to configure networking on these VMs, as well as routing within cRPD so we can have an OSPF adjacency.

First, we’ll access crpd1 by connecting via ssh with the command ssh [email protected] -p 2022 (password is Password1!):

Note that we still have a bash shell here, not the Junos CLI. This is because cRPD is built on an Ubuntu container image, so we still have familiar Linux primitives available. Better still, the container runs with the NET_ADMIN capability, so any network changes we make here apply to the VM as a whole.

Then, in another terminal session, run ssh [email protected] -p 2023 (password is Password1!) to access crpd2 and paste the following commands:
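The exact commands from the original walkthrough aren’t reproduced here, but a configuration along these lines should produce an adjacency. This Python sketch prints a paste-able sequence for each VM. The Linux addressing goes into bash, and the OSPF configuration goes into the cRPD CLI, ending back in operational mode. The addressing plan is hypothetical. Treating the Linux loopback as lo.0 inside cRPD is an assumption worth verifying on your own instance.

```python
"""Sketch: print a paste-able config sequence for each cRPD VM."""

# Hypothetical addressing plan: (eth1 address, loopback address / router ID).
ROUTERS = {
    "crpd1": ("10.12.0.1/24", "1.1.1.1"),
    "crpd2": ("10.12.0.2/24", "2.2.2.2"),
}


def paste_script(name: str) -> list[str]:
    """Return the lines to paste into the VM's shell, in order."""
    link_addr, loopback = ROUTERS[name]
    return [
        # Linux side, in bash: with NET_ADMIN these apply to the whole VM.
        f"ip addr add {link_addr} dev eth1",
        "ip link set eth1 up",
        f"ip addr add {loopback}/32 dev lo",
        # Junos side: enter the cRPD CLI and configure OSPF.
        "cli",
        "configure",
        f"set routing-options router-id {loopback}",
        "set protocols ospf area 0.0.0.0 interface eth1",
        # Assumption: cRPD presents the Linux loopback as lo.0.
        "set protocols ospf area 0.0.0.0 interface lo.0 passive",
        "commit and-quit",
    ]


if __name__ == "__main__":
    for router in ROUTERS:
        print(f"### {router}")
        print("\n".join(paste_script(router)))
```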

If you ran the above commands, you should still be in crpd2’s Junos CLI. You can verify that you have an established OSPF adjacency, and have learned the route to crpd1’s loopback address here:


Type exit to return to the bash shell, and run a ping to verify connectivity:

The Junos configuration we loaded into the Docker image early in this walkthrough was minimal. The point of this exercise was to develop an image configuration that could be programmed further via NETCONF at runtime.

So, for all the marbles, you can run these commands to create a Python virtualenv, install PyEZ (the Python library for Junos), and execute a Python script that outputs the OSPF neighbors from crpd1. This confirms that we can communicate with the instance via NETCONF (be sure you’ve exited your SSH sessions from the previous steps - these commands are meant to be run back in the crpd-linuxkit repository you cloned earlier):
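The repository ships its own script for this step. As a rough sketch of the same idea, the following uses PyEZ’s Device class and the get_ospf_neighbor_information RPC. The port 2022 and the password come from this walkthrough; the user root is an assumption. The XML element names are the ones Junos typically returns for show ospf neighbor | display xml. The parsing is kept separate so it can be tried without a live device.

```python
"""Sketch: list crpd1's OSPF neighbors over NETCONF using PyEZ."""
import xml.etree.ElementTree as ET


def parse_neighbors(reply: ET.Element) -> list[dict[str, str]]:
    """Pull the main fields out of a get-ospf-neighbor-information reply element."""
    fields = {
        "address": "neighbor-address",
        "interface": "interface-name",
        "state": "ospf-neighbor-state",
        "id": "neighbor-id",
    }
    return [
        {key: (nbr.findtext(tag) or "").strip() for key, tag in fields.items()}
        for nbr in reply.iter("ospf-neighbor")
    ]


def fetch_neighbors(host: str = "127.0.0.1", port: int = 2022,
                    user: str = "root", password: str = "Password1!") -> list[dict[str, str]]:
    # Imported here so parse_neighbors() works without junos-eznc installed.
    from jnpr.junos import Device

    # Assumption: the SSH user is root; port and password come from this walkthrough.
    with Device(host=host, port=port, user=user, passwd=password) as dev:
        return parse_neighbors(dev.rpc.get_ospf_neighbor_information())


if __name__ == "__main__":
    for nbr in fetch_neighbors():
        print(f"{nbr['address']:<16} {nbr['interface']:<8} {nbr['state']:<8} {nbr['id']}")
```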

You should see this:

There are a number of projects that could be useful as alternatives, both for the Linux build portion and for the disaggregated software. This post proposes one possible path, not the only one you could choose.

In addition to using something like Free-Range Routing as your routing stack, you don’t even have to run a routing stack at all. There are a bunch of use cases where the UX of a Dockerfile can/should be married with the isolation of a hypervisor. You also don’t have to use LinuxKit for the Linux build portion - there are a number of alternatives that could be used, each with its own pros/cons:

- Firecracker Ignite - This is a tool the folks at Weave built to add a GitOps workflow for building Firecracker images. I looked at it briefly, but it seems fairly opinionated about how it runs things. It’s simpler than LinuxKit, but it doesn’t seem to let me export a VM image that I can then run myself in a traditional hypervisor. This tight coupling doesn’t really give me what I want today. YMMV.

- RancherOS - This seems to be much closer to the traditional Docker toolchain, and could very well work for this.

- CoreOS - Also might work, though I’m not sure of its state after the Red Hat acquisition.

After I published this blog post, a few folks gave me a few more to keep an eye on:

- Vorteil (Don’t ask me how to pronounce it)

- BottleRocketOS - Seems to be made by the AWS folks, so I have to assume it has something to do with Firecracker, but not sure how. Seems cool, and looks like it’s written in Rust, which is cooler.

I hope this was helpful to someone that might be thinking of doing the same things I am. For a long time, I’ve viewed the UX of a Dockerfile as something I could only get if I was willing to part with the security and isolation of virtual machines. I love that there are a number of ways in 2020 that I can bypass that tradeoff entirely.

At the April 25, 2017 Weave Online User Group, Justin Cormack from Docker was our guest speaker and he talked about “Docker Linux Distributions that work with Kubernetes: LinuxKit”.

Justin gave an overview of LinuxKit, which was introduced and open sourced at DockerCon 2017.

Background & History

LinuxKit started as a Docker Inc. internal project a few years ago when Justin Cormack was working on the “Docker for MacOS Desktop edition.” Initially available to Docker employees only, it was eventually launched outside of the company and made generally available to all Docker users.

LinuxKit provides a Docker-native experience on IT infrastructure running a variety of operating systems that don’t ship with a native version of Linux. Providing a standard version of Linux wherever users run Docker containers is one of the primary motivations behind the development of LinuxKit.

Requirements

These were the requirements identified for LinuxKit:

Being able to provide immutable delivery tops the list in importance and was a key requirement since the project’s inception. Solomon Hykes, founder of Docker Inc., summarized LinuxKit as “A secure, portable and lean OS built for containers” in his DockerCon 2017 keynote.

Secure

According to Justin, “Security is built-in for LinuxKit. Docker has been working on security aspects of this project right from the beginning.”

LinuxKit follows the NIST Application Container Security Guide, which recommends using a container-specific OS instead of a general-purpose one, so that there are fewer opportunities for attacks that can compromise the OS.

This slide pointed out some of the ways LinuxKit handles security:

Portable

LinuxKit has a rich set of contributors, including IBM, Microsoft, Intel and many others. This wide range of contributors adds to its portability and helps achieve LinuxKit’s main goal, which is to have a Linux system that runs on platforms that don’t include Linux.

Here are some of the contributors:

Lean

The footprint for an OS built with LinuxKit is small which minimizes the attack surface. The main idea behind LinuxKit is to build containers with containers. An OS built with LinuxKit is a minimal Linux system that is just enough to get started and is just large enough to run the required application or service.

Build Tool and Demo

LinuxKit is also closely tied to Moby, the new open source project, and its build system, the Moby tool, which uses YAML files similar to Docker Compose files to define boot images.

Here is the sample build YAML file that Justin used for the demo:

And finally we can see that containerd is managing the container lifecycle within the demo linuxkit binary:

The status output shows nginx and rngd running. These services were specified in the services section of the linuxkit.yml file:

Managing the Cluster

InfraKit is one cluster management tool that can be used to manage any infrastructure created by LinuxKit:

As stated in the slide, InfraKit is declarative, which means that users only specify the desired state of the infrastructure, and InfraKit maintains the infrastructure as specified. For example, if a container terminates, it spins up another one.
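To make the declarative idea concrete, here is a generic reconciliation step in Python. It is not InfraKit’s API, only an illustration of the pattern: compare the desired state with what is actually running, then plan the difference.

```python
"""Generic desired-state reconciliation (illustration only, not InfraKit's API)."""
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    to_start: int              # how many new instances to launch
    to_stop: tuple[str, ...]   # which running instances to terminate


def reconcile(desired: int, running: list[str]) -> Plan:
    """Compare the desired instance count with what's running and plan the difference.

    `running` is assumed to be ordered oldest-first, so any surplus is taken
    from the newest instances.
    """
    if desired < 0:
        raise ValueError("desired count must be non-negative")
    surplus = len(running) - desired
    if surplus > 0:
        return Plan(to_start=0, to_stop=tuple(running[desired:]))
    return Plan(to_start=-surplus, to_stop=())
```

A controller would call reconcile() on a loop. When a container dies, the running list shrinks and the next plan asks for a replacement, which is the behaviour described above.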

This slide displays an architectural diagram for InfraKit:

- InfraKit and LinuxKit complement each other, share tooling, and can also handle rolling updates of containers.

- LinuxKit also supports other cluster management tools, such as Terraform, and AWS CloudFormation.

Looking Ahead

Future work will focus on different projects that can build with LinuxKit:

Support for different platforms is another area that needs further development:

For more information, check out the LinuxKit project on GitHub.

LinuxKit for Kubernetes

Next up, Ilya Dmitrichenko of Weaveworks introduced a project in the linuxkit tree on GitHub. He stated three advantages of using LinuxKit for Kubernetes:

The biggest advantage of using LinuxKit for Kubernetes is that it eliminates the cloud provider-specific base images variance or lock-in to a specific Linux distribution. With a from-scratch model to generate the base image, the cluster can run identically on different on-premise or cloud platforms.

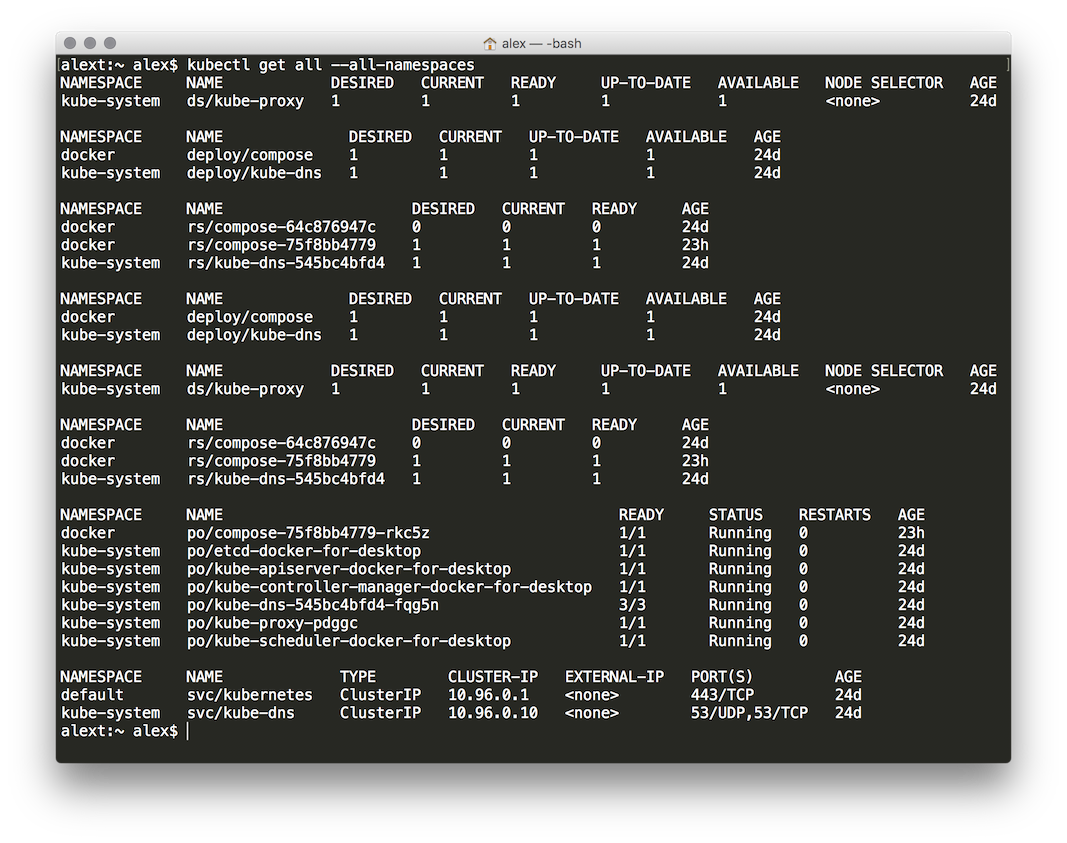

Ilya then followed up with a demo based on the instructions on LinuxKit for Kubernetes. He spun up a Kubernetes master and a few worker nodes:


And logged into the Kubernetes master to check the status of the Kubernetes cluster:

After watching to ensure that all of the pods successfully joined the cluster, Ilya went over the kube-master.yml file that defined the build parameters for LinuxKit. To speed up the demo, these two containers were added to the YAML file:

Ilya, as part of Weaveworks’ DX team, was also a central contributor to this project. He helped demonstrate how LinuxKit can be used with Kubernetes, which he also presented with Justin Cormack at DockerCon 2017 in Austin.

See the video of the talk here:

Thank you for reading our blog. We build Weave Cloud, which is a hosted add-on to your clusters. It helps you iterate faster on microservices with continuous delivery, visualization & debugging, and Prometheus monitoring to improve observability.

Try it out, join our online user group for free talks & trainings, and come and hang out with us on Slack.